I’ve updated the calendar for the Spring 2023 semester and am pleased that the number of events is approaching pre-pandemic levels. Note, though, that more events require registration, proof of vaccination, and/or a clear covid test.

Philosophy Roulette Live on Twitch.tv

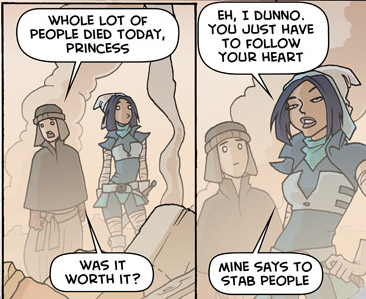

I’m streaming reviews of philosophy papers live on Twitch (my channel here / schedule here). Basically I download a random paper from PhilPapers, and read & comment on it live. Come join me if you want to chat philosophy, read along, criticize my interpretations, or just stave off cabin — non-covid19 — fever. image credit: oglaf

Dialetheia

Good experimental philosophy demonstrating the existence of dialetheia are my journal reviews. image credit: Mark Bryan, Last of the Clowns

Fall 2019 Update

For technical reasons (a PHP security issue), the site needed to be updated: the old theme, which governs the look and some functionality, did not work with the latest PHP version. As far as I can tell, this new theme works well and nothing major is broken. However, there may yet be some changes made or errors found. Please let me know of any concerns or issues.

The 3 Rs of Publishing Philosophy

So you want to publish philosophy? Follow the three Rs! 1. Rhetoric No matter how good your results are or how technically sophisticated your argumentation, if it is done in an obscure way, your paper will not be published. There are at least two, but more usually three or more people that will read your paper when it is sent to a journal. First is the head editor and/or section editor. If they can’t make […]

public philosophy stories 4: Tragedy Ensues

Spring was terrible this year. Disgustingly hot days scattered through miserable cold and wet ones. It was typically miserable this last Monday (30 April) as I approached the Empire State Building walking down 5th. A cold drizzle turned into a cold rain, clearing the sidewalks of tourists only to expose the open-air asylum that is the NYC homeless population. Before I could enter the CUNY Grad Center one of these patients barred my path. […]

Paradox of Logical Privilege

Let us assume that logic cleaves the world at its corners. Then everything can be divided into the logically privileged, that which makes up the corners, and the not logically privileged, that which makes up everything else. Where then does the concept of logical privilege fall? If logical privilege is logically privileged, then it describes it as something that is at the corners, and not the content. But then it must describe not have described […]

Happy Possible Worlds Day!

On this day in 1277 Étienne (Stephen) Tempier, bishop of Paris, declared that God could have made worlds other than this one, perhaps the first time anyone publicly argued for possible worlds.

On Public Philosophy 1: Marketing

Quite a few pixels have been burned on the topic of public philosophy lately. Notably the American Philosophical Association recently registered its support for the practice. Carrie Jenkins wrote up an interesting guide and Eric Schliesser then commented on it … via Daily Nous. The thing that strikes me is that no one treats public philosophy as actual philosophy. They basically treat it as marketing for whatever it was they were already doing. Keep in […]

Practical Ontologist 2.0

I’ve made a major update to my site, The Practical Ontologist. Check it out and let me know what you think. Major site updates: Topical Subsections: Metaphysics, Professional, Science, Traditions, Value, & Fun. Incoming posts are classified via Naive Bayes Machine Learning. The categories, save Fun and Professional, are roughly modeled on [PhilPapers’ classifications](https://philpapers.org/browse/all). Fun is a stream of philosophical memes, mainly from Tumblr. Professional contains news about the profession and other meta-philosophical content. RSS […]